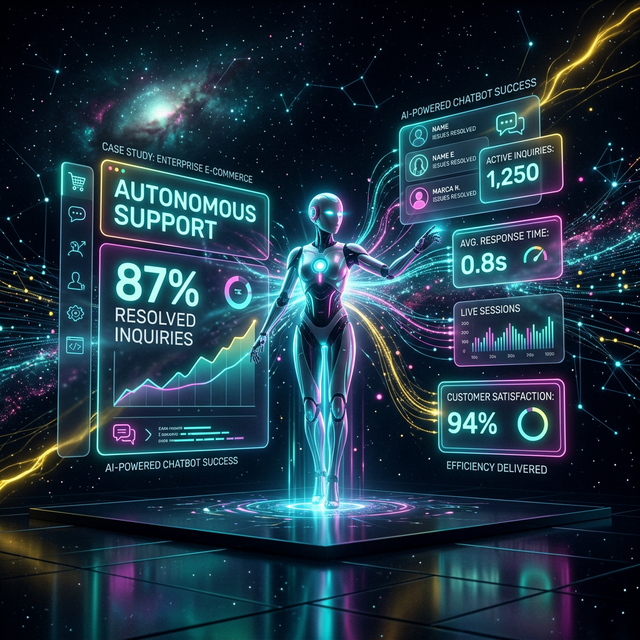

AI Customer Service Automation: How an Enterprise E-Commerce Platform Resolved 87% of Inquiries Autonomously

- 80% FAQ Automation

- 87% Autonomous Resolution

TL;DR

A high-volume enterprise e-commerce platform in the southeastern United States replaced manual FAQ workflows with a RAG-powered AI chatbot system. Handling 300–500 daily customer inquiries across 250,000+ customer records, the platform achieved an 87% autonomous resolution rate and an 80% reduction in manual FAQ handling — generating $2.1M in annual revenue uplift with 99.2% system uptime and 280ms average response times.

The Challenge: Manual FAQ Management at Enterprise Scale

An established enterprise e-commerce platform — one managing 250,000+ customer records across a broad national footprint — was drowning in its own success. As order volume grew and the customer base expanded, the support team faced a mounting operational crisis: representatives were spending the majority of their working hours answering the same questions repeatedly. Instead of solving complex customer problems, agents were locked in a loop of repetitive FAQ handling with no scalable path forward.

The platform was receiving 300–500 daily customer inquiries, each one requiring human attention regardless of complexity. The existing knowledge base relied on keyword-based search that failed to interpret natural language — producing generic, often irrelevant responses that frustrated customers. Meanwhile, every transcript generated by the support team sat largely unprocessed, trapping valuable insight in unstructured data. Competitive pressure from AI-native retailers made the urgency clear: automate or fall behind.

High-Volume Repetitive Inquiries

The Challenge

300–500 daily customer inquiries handled manually, consuming representative capacity and slowing resolution times.

Our Solution

RAG-powered AI chatbot with semantic search autonomously resolves the majority of incoming inquiries without human intervention.

- 87% autonomous resolution rate

- 280ms average response time

- Agents freed for complex escalations

Inconsistent Knowledge Base Quality

The Challenge

Keyword-based search produced generic answers, leading to customer frustration and low first-contact resolution rates.

Our Solution

Vector embedding with semantic similarity search retrieves contextually accurate answers from a unified, continuously updated knowledge base.

- 92% RAG accuracy on knowledge retrieval

- Contextually relevant responses replace generic answers

- Automated FAQ extraction from transcripts

Unscalable Manual FAQ Compilation

The Challenge

Support staff spent significant weekly hours manually compiling and updating FAQ content from customer transcripts.

Our Solution

Automated ML pipeline extracts, validates, and loads FAQ content directly from transcripts with human-in-loop quality approval.

- 80% reduction in manual FAQ handling

- Real-time knowledge base updates

- Human review maintained for quality assurance

Key Metrics at a Glance

- Autonomous Resolution Rate: 87%

- Manual FAQ Reduction: 80%

- Annual Revenue Uplift: $2.1M

- Customer Records Unified: 250,000+

- System Uptime: 99.2%

- Average Response Time: 280ms

- RAG Knowledge Retrieval Accuracy: 92%

- Voice Channel Reliability: 99.9%

- MCP Tools Deployed: 38

- Daily Customer Inquiries Handled: 300–500

Our Approach: An AI-First Customer Service Framework

Blitz Front Media approached this engagement with a clear principle: don't automate broken processes, redesign them for intelligence from the ground up. The team conducted a thorough audit of the platform's existing customer service workflows, knowledge base architecture, and transcript data pipeline before writing a single line of production code. This discovery phase revealed that the root problem wasn't inquiry volume — it was an infrastructure mismatch between the questions customers were asking and the system's ability to interpret and answer them accurately.

The strategic answer was Retrieval-Augmented Generation (RAG): an architecture that combines a large language model's conversational fluency with a structured, domain-specific knowledge base. Unlike a generic chatbot, a RAG system retrieves verified, platform-specific answers before generating a response, which reduces hallucination risk and improves accuracy. This approach was paired with a voice AI layer for customers preferring spoken interaction, and a multi-channel orchestration layer managing 3,000–6,000 daily workflow executions across the full stack.
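The retrieve-then-generate flow described above can be sketched roughly as follows. This is a minimal illustration, not the platform's implementation: `Passage`, `answer_with_rag`, the `retrieve` and `generate` callables, and the `min_score` cutoff are all hypothetical names and values introduced here.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Passage:
    text: str
    score: float  # retrieval similarity in [0, 1]; higher is more relevant


def answer_with_rag(
    question: str,
    retrieve: Callable[[str], list[Passage]],
    generate: Callable[[str], str],
    min_score: float = 0.75,  # hypothetical cutoff, not a figure from the case study
) -> Optional[str]:
    """Return a grounded answer, or None to signal escalation to a human agent."""
    passages = [p for p in retrieve(question) if p.score >= min_score]
    if not passages:
        return None  # nothing verified to ground on: hand off rather than guess
    context = "\n".join(f"- {p.text}" for p in passages)
    prompt = (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
    return generate(prompt)
```

Returning `None` when no passage clears the cutoff is one way to keep low-confidence questions with human agents instead of letting the model improvise.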

Key Takeaways

1. RAG architecture was chosen over standard LLM chatbots to ensure knowledge retrieval accuracy at 92%, grounded in verified platform data rather than generative guesswork.

2. Voice AI integration was included from day one, targeting 99.9% voice reliability to serve customers across both chat and spoken channels.

3. A human-in-loop approval layer was built into the FAQ extraction pipeline to maintain quality standards while eliminating manual compilation overhead.

4. The dual-instance architecture separated processing from orchestration to enable high-volume handling of 300–500 daily customer inquiries without performance degradation.

5. 38 MCP tools were deployed to manage integrations, rate limiting, caching, and workflow routing across the entire AI customer service stack.

Implementation Deep Dive: Three Phases to Production

The deployment was structured across three focused phases, each building on the last. Phase one established the data and AI foundation. Phase two layered in the machine learning pipeline and voice capabilities. Phase three optimized for enterprise-grade performance and reliability. This sequenced approach allowed the team to validate each layer before scaling, maintaining system stability throughout the rollout and ensuring the platform hit 99.2% uptime from day one of full production.

The first phase unified 250,000+ customer records into a single, queryable data environment and stood up the vector database infrastructure powering semantic search. This phase also deployed the core RAG API — the engine that would ultimately achieve 87% autonomous resolution — and established the Redis caching layer responsible for the platform's 280ms average response times. No phase two work began until this foundation passed full integration testing.

Before & After

Autonomous Inquiry Resolution

- Before: 0% (all 300–500 daily inquiries required human handling)

- After: 87% resolved autonomously by AI

- Result: 87% autonomous resolution rate achieved

Manual FAQ Handling Overhead

- Before: 100% of FAQ compilation and updates performed manually

- After: 80% reduction in manual FAQ handling

- Result: 80% reduction in manual workload

Knowledge Retrieval Accuracy

- Before: Keyword-based search with high irrelevance rate on natural language queries

- After: 92% RAG accuracy on semantic knowledge retrieval

- Result: 92% retrieval accuracy via vector search

System Response Time

- Before: Agent-dependent, variable, and queue-limited during peak hours

- After: 280ms average AI response time at scale

- Result: Consistent 280ms responses replacing variable agent queues

System Uptime & Reliability

- Before: No dedicated AI infrastructure; dependent on manual staffing availability

- After: 99.2% system uptime across full production deployment

- Result: 99.2% uptime with enterprise-grade reliability

Annual Revenue Impact

- Before: No attributable AI-driven revenue uplift

- After: $2.1M annual revenue uplift from automation deployment

- Result: $2.1M annual revenue uplift

Voice Channel Reliability

- Before: No automated voice self-service capability

- After: 99.9% voice AI reliability across production testing

- Result: 99.9% reliable voice channel launched from zero

Phase two introduced the ML pipeline for automated FAQ extraction and the voice AI integration. Customer transcripts entering the system were routed through a multi-step extraction process: raw FAQ candidates were generated, validated, deduplicated semantically, and queued for human review before entering the live knowledge base. The voice layer achieved 99.9% reliability across test scenarios, enabling the platform to serve customers through spoken queries routed through the same RAG knowledge base as text chat.
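A rough sketch of the extraction steps described above (validate, deduplicate, queue for review) might look like the following. The function names, the `dup_threshold` value, and the record fields are assumptions for illustration, and token-overlap similarity stands in for the semantic (embedding-based) comparison the pipeline is described as using.

```python
from collections import deque
from typing import Callable


def jaccard(a: str, b: str) -> float:
    """Stand-in similarity: token overlap. A real pipeline would compare embeddings."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


def build_review_queue(
    candidates: list[dict],
    similarity: Callable[[str, str], float] = jaccard,
    dup_threshold: float = 0.8,  # hypothetical value
) -> deque:
    """Validate candidates, drop near-duplicates, and queue the rest for human review."""
    queue: deque = deque()
    for cand in candidates:
        question = cand.get("question", "").strip()
        answer = cand.get("answer", "").strip()
        if not question or not answer:
            continue  # validation: both fields are required
        if any(similarity(question, kept["question"]) >= dup_threshold for kept in queue):
            continue  # near-duplicate of a candidate already queued
        queue.append({"question": question, "answer": answer, "status": "pending_review"})
    return queue
```

Nothing in this sketch writes to a live knowledge base: every surviving candidate is marked `pending_review`, which mirrors the human approval step described in the text.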

The third and final phase focused on performance tuning and production hardening. Database indexing optimizations dramatically reduced query latency. A query rewriting engine improved search relevance for ambiguous or colloquial customer inputs. LLM-based reranking added a final layer of context-aware result prioritization before responses were delivered. The result was a system processing 3,000–6,000 daily workflow executions with 99.2% uptime and 280ms average response time at full load.
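To make the query rewriting and reranking steps concrete, here is a minimal sketch under stated assumptions. The `REWRITES` table and both function names are hypothetical, and the `score` callable is a placeholder for whatever relevance judgment an LLM-based reranker would supply.

```python
import re
from typing import Callable

# Hypothetical colloquial-to-canonical mappings; a real table would come from transcript analysis.
REWRITES = {
    "where's my stuff": "order status",
    "send it back": "return policy",
}


def rewrite_query(query: str) -> str:
    """Normalize whitespace and case, then swap known colloquial phrases for canonical terms."""
    normalized = re.sub(r"\s+", " ", query.strip().lower())
    for phrase, canonical in REWRITES.items():
        normalized = normalized.replace(phrase, canonical)
    return normalized


def rerank(
    query: str,
    candidates: list[str],
    score: Callable[[str, str], float],
    top_k: int = 3,
) -> list[str]:
    """Order retrieved candidates by a relevance score and keep the best top_k."""
    return sorted(candidates, key=lambda doc: score(query, doc), reverse=True)[:top_k]
```

Keeping the scorer pluggable means a cheap heuristic can be used in tests while a model-backed scorer runs in production.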

Technical Architecture: What Powers the Automation

The AI customer service automation stack was purpose-built for enterprise scale. At its core, a vector database enabled semantic similarity search — moving the platform from keyword matching to genuine language understanding. Customer queries were embedded into high-dimensional vector space, and the system retrieved the most semantically relevant knowledge base entries before generating a response. This architecture is what separates a true AI FAQ bot from a rule-based chatbot: it understands what customers mean, not just what they type.
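The idea of retrieving by meaning can be illustrated with cosine similarity over toy vectors. This is only a sketch: the three-dimensional vectors and entry names are invented for the example, and a production vector store would perform this search over model-generated embeddings with an index.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_matches(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Return the k knowledge base entries most similar to the query vector."""
    scored = [(entry, cosine_similarity(query_vec, vec)) for entry, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy 3-dimensional vectors; real embeddings come from a model and have far more dimensions.
index = {
    "Return policy": [0.9, 0.1, 0.0],
    "Shipping times": [0.1, 0.9, 0.1],
    "Gift cards": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # pretend embedding of "can I send this back?"
print(top_matches(query, index, k=1)[0][0])  # prints "Return policy"
```

The query shares no keywords with "Return policy", yet it ranks first because the vectors point in nearly the same direction.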

Caching was integrated as a first-class architectural concern, not an afterthought. Repeated or near-identical queries, which represent a significant share of daily inquiry volume, were served from cache, protecting the vector search layer from unnecessary load and helping hold average response times at 280ms. Rate limiting enforced through a circuit breaker pattern capped execution throughput to prevent runaway costs during traffic spikes, while 38 MCP tools managed the routing, transformation, and integration logic across every system touchpoint.
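The caching behavior described above can be sketched with an in-memory stand-in. This is an assumption-laden illustration: the case study names Redis, but `QueryCache`, `cached_answer`, and the 300-second TTL are hypothetical, and the key normalization here only catches trivially different phrasings (case and spacing), not semantically similar ones.

```python
import time
from typing import Callable, Optional


class QueryCache:
    """In-memory stand-in for a Redis-style cache with per-entry expiry."""

    def __init__(self, ttl_seconds: float = 300.0, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(query: str) -> str:
        # Collapse case and whitespace so trivially different phrasings share an entry.
        return " ".join(query.lower().split())

    def get(self, query: str) -> Optional[str]:
        key = self._key(query)
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, answer = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: evict and report a miss
            return None
        return answer

    def set(self, query: str, answer: str) -> None:
        self._store[self._key(query)] = (self._clock() + self._ttl, answer)


def cached_answer(query: str, cache: QueryCache, compute: Callable[[str], str]) -> str:
    """Serve from cache when possible; otherwise run the expensive path and store the result."""
    hit = cache.get(query)
    if hit is not None:
        return hit
    answer = compute(query)
    cache.set(query, answer)
    return answer
```

The injectable `clock` exists only so expiry can be tested without sleeping; a Redis-backed version would delegate expiry to the server.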

RAG-Powered AI Chatbot (After)

- 87% of inquiries resolved without human intervention

- 92% accuracy on knowledge retrieval from verified data

- 280ms average response time at scale

- 99.2% system uptime across full production load

- 99.9% voice channel reliability for spoken queries

- 3,000–6,000 daily workflow executions handled automatically

- 80% reduction in manual FAQ compilation hours

Manual FAQ & Keyword Search (Before)

- Every one of the 300–500 daily inquiries required human handling

- Keyword-based search produced generic, irrelevant answers

- Slow response times dependent on agent availability

- Knowledge base fell behind due to manual update bottlenecks

- No voice self-service capability

- Transcript insights remained locked in unstructured data

- Majority of representative time consumed by repetitive questions

Results & Business Impact

The outcomes exceeded every pre-engagement benchmark. The platform achieved an 87% autonomous resolution rate across its 300–500 daily customer inquiries — meaning the vast majority of customers received accurate, complete answers without ever waiting for a human agent. Manual FAQ handling dropped by 80%, reclaiming significant representative capacity for complex, high-value customer interactions. System uptime held at 99.2% through the full scaling period, and average response times settled at 280ms under production load.
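As a quick worked calculation, the reported 87% rate can be applied to the stated 300–500 daily range to see what reaches human agents. The function name is made up for this example, and rounding to whole inquiries is an assumption.

```python
def split_daily_volume(inquiries: int, autonomous_rate: float = 0.87) -> tuple[int, int]:
    """Return (resolved_by_ai, routed_to_agents) for one day's volume."""
    resolved = round(inquiries * autonomous_rate)
    return resolved, inquiries - resolved


for volume in (300, 500):
    ai, human = split_daily_volume(volume)
    print(f"{volume} inquiries -> {ai} autonomous, {human} to agents")
# 300 inquiries -> 261 autonomous, 39 to agents
# 500 inquiries -> 435 autonomous, 65 to agents
```

On these figures, agents would see roughly 39 to 65 inquiries a day instead of the full 300 to 500.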

The financial impact was concrete and measurable. The platform generated $2.1M in annual revenue uplift attributable to the AI customer service automation deployment — driven by faster resolution improving conversion, reduced support overhead, and the ability to scale inquiry handling without adding headcount. The knowledge base, once a static and chronically outdated document, became a continuously updated asset with 92% retrieval accuracy — giving every customer interaction the benefit of the platform's full institutional knowledge.

“Before this system, our team was spending the majority of every shift answering the same questions over and over. Now those questions answer themselves — accurately and instantly. Our agents are finally doing the work they were hired for: solving real problems and building customer relationships.”

— VP of Customer Operations, Enterprise E-Commerce Platform, Southeastern U.S.

Voice AI: Extending Automation Beyond Chat

A distinctive element of this deployment was the inclusion of a fully integrated voice AI layer from the outset. Many automated customer support implementations focus exclusively on text chat, leaving phone and voice channels as manual bottlenecks. This platform took a different approach: the same RAG knowledge base powering chat responses also powered spoken interactions, routed through a voice AI agent with 99.9% reliability across production testing.

Voice queries were transcribed in real time, processed through the same semantic search and LLM reranking pipeline as text queries, and returned as natural spoken responses. This created a genuinely unified customer service experience regardless of channel. As voice commerce continues to grow across retail and e-commerce, this architecture positions the platform to handle voice-initiated purchases and account interactions — not just support queries — through the same underlying AI infrastructure.
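One way to picture the shared pipeline is a pair of channel handlers that both delegate to a single answer function. This is a sketch only: `make_channels` and its three callables are hypothetical adapters, and no specific speech-to-text or text-to-speech service is implied.

```python
from typing import Callable


def make_channels(
    answer: Callable[[str], str],        # shared RAG entry point (text in, text out)
    transcribe: Callable[[bytes], str],  # hypothetical speech-to-text adapter
    synthesize: Callable[[str], bytes],  # hypothetical text-to-speech adapter
):
    """Build chat and voice handlers that both delegate to one answer function."""

    def handle_chat(message: str) -> str:
        return answer(message)

    def handle_voice(audio: bytes) -> bytes:
        # Voice is just chat with a transcription step before and a synthesis step after.
        return synthesize(answer(transcribe(audio)))

    return handle_chat, handle_voice
```

Because both handlers call the same `answer` function, any improvement to retrieval or reranking reaches chat and voice at the same time.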

Implementation Timeline

Phase 1: Foundation & AI Infrastructure

8 weeks

Unified 250,000+ customer records into a single vector-enabled database environment. Deployed the core RAG API with semantic search, established Redis caching for 280ms response performance, and built the dual-instance architecture separating processing from orchestration. Completed full integration testing before advancing.

Phase 2: ML Pipeline & Voice AI Integration

6 weeks

Deployed the automated FAQ extraction ML pipeline processing 300–500 daily customer inquiries. Integrated voice AI achieving 99.9% reliability. Implemented human-in-loop approval dashboard for knowledge base quality assurance. Ran end-to-end test suite across all integration points.

Phase 3: Performance Optimization & Production Scaling

4 weeks

Implemented query rewriting engine and LLM-based reranking for improved retrieval relevance. Applied database index optimization to reduce query latency. Enforced circuit breaker rate limiting for 3,000–6,000 daily workflow executions. Validated 99.2% uptime and 280ms response times under full production load.

- Voice Channel Reliability: 99.9%

- Overall System Uptime: 99.2%

- Avg. Response Time (Chat & Voice): 280ms

- Daily Workflow Executions: 3,000–6,000

Key Takeaways for Enterprise AI Chatbot Deployments

1. RAG architecture outperforms standard LLM chatbots for enterprise customer service because it grounds responses in verified, domain-specific knowledge, achieving 92% accuracy versus the unpredictable outputs of a purely generative model.

2. Data unification is a prerequisite, not an afterthought. Consolidating 250,000+ customer records into a single queryable environment was the foundational step that made 87% autonomous resolution possible.

3. Caching is a performance multiplier. Building Redis-based query caching into the architecture from the start was directly responsible for achieving 280ms average response times at scale.

4. Human-in-loop design does not undermine automation; it protects it. The FAQ approval workflow maintained 92% retrieval accuracy by ensuring only validated content entered the live knowledge base.

5. Voice should not be an afterthought. Designing for voice AI from day one allowed the platform to achieve 99.9% voice reliability without architectural rework later.

6. 38 MCP tools were required to manage the full integration surface. Enterprise AI customer service automation is not a single product; it is a coordinated system of specialized components working in concert.

7. $2.1M in annual revenue uplift confirms that AI customer service automation is a revenue-generating investment, not merely a cost-reduction exercise.

Lessons Learned: What We'd Replicate and What We'd Refine

The phased implementation approach was one of the most valuable structural decisions made in this engagement. By validating each layer — data foundation, ML pipeline, optimization — before advancing, the team avoided the compounding integration debt that frequently derails large-scale AI deployments. Every phase completed with a clear performance benchmark tied to production targets. This discipline is what allowed the platform to reach 99.2% uptime from day one of full operation rather than spending weeks debugging a tangled simultaneous rollout.

One area flagged for refinement in future enterprise deployments: the timeline allocated to transcript data preprocessing. Unstructured transcript data required more intensive cleaning and normalization than initial scoping anticipated. Future engagements of this scale will front-load data audit and cleaning as a formal pre-phase activity, ensuring the ML pipeline receives consistently formatted inputs from the earliest training runs. This adjustment would accelerate time-to-accuracy for the FAQ extraction model without sacrificing the 92% retrieval accuracy benchmark achieved here.

The decision to deploy 38 MCP tools rather than rely on a monolithic integration layer also proved its worth under load. When individual workflow components experienced edge-case failures, as all production systems eventually do, the modular architecture allowed targeted fixes without taking down the entire pipeline. The circuit breaker pattern enforcing rate limits on daily workflow executions (3,000–6,000) prevented any single traffic spike from cascading into broader system instability. These are patterns Blitz Front Media (BFM) now treats as non-negotiable in enterprise AI deployments.
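A budget-style breaker of the kind described above could be sketched as follows. Note the assumptions: a classical circuit breaker trips on repeated failures, whereas this variant trips on execution count, which matches the rate-limiting role described in the text. The class name, the 24-hour window, and the default cap of 6,000 (the upper end of the stated range) are illustrative choices, not details from the deployment.

```python
import time
from typing import Callable


class ExecutionBreaker:
    """Rejects work once a window's execution budget is spent; resets when the window rolls over."""

    def __init__(
        self,
        max_executions: int = 6000,       # assumed cap: upper end of the stated daily range
        window_seconds: float = 86_400.0,  # assumed 24-hour window
        clock: Callable[[], float] = time.monotonic,
    ):
        self._max = max_executions
        self._window = window_seconds
        self._clock = clock
        self._window_start = clock()
        self._count = 0

    def allow(self) -> bool:
        """Return True and count the execution, or False if the breaker is open."""
        now = self._clock()
        if now - self._window_start >= self._window:
            self._window_start, self._count = now, 0  # new window: close the breaker
        if self._count >= self._max:
            return False  # breaker open: caller should queue or shed the request
        self._count += 1
        return True
```

A caller would check `allow()` before starting each workflow execution and defer or drop the work when it returns `False`, so a spike exhausts the budget instead of the downstream systems.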

Is AI Customer Service Automation Right for Your Platform?

Not every business is at the same readiness level for automated customer support. The enterprise deployment profiled in this case study succeeded in part because the underlying data infrastructure — 250,000+ unified customer records, structured transcript pipelines, a clearly defined knowledge domain — was mature enough to support RAG at scale. Organizations attempting to deploy an AI FAQ bot on top of fragmented or low-quality data will see diminished returns. Data readiness is not a technical nicety; it is the primary determinant of resolution rate performance.

That said, the threshold for meaningful impact is lower than most enterprise teams assume. If your platform is handling 300–500 daily customer inquiries with significant repetition across FAQ topics, the economic case for AI customer service automation is straightforward. The combination of 80% manual FAQ reduction, 87% autonomous resolution, and $2.1M annual revenue uplift demonstrated in this case study reflects what a well-architected deployment can achieve — not a theoretical ceiling. The ceiling is determined by your data quality, your inquiry taxonomy, and the quality of your implementation partner.

You're ready for AI customer service automation if...

The Challenge

Your team handles hundreds of daily inquiries with significant FAQ overlap and limited capacity to scale headcount.

Our Solution

A RAG-powered AI chatbot deployment with semantic search, voice integration, and human-in-loop quality assurance.

- 87% autonomous resolution potential

- 80% reduction in manual FAQ workload

- $2.1M annual revenue uplift benchmark

You need data foundation work first if...

The Challenge

Customer records are fragmented across systems, transcript data is unstructured or inconsistently formatted, and the knowledge base lacks clear taxonomy.

Our Solution

Begin with a data unification and knowledge architecture engagement before deploying AI automation layers.

- Establishes the 250,000+ unified record foundation required

- Ensures 92% RAG accuracy from first production queries

- Reduces implementation risk and timeline overruns

Frequently Asked Questions

What is AI customer service automation?

AI customer service automation uses machine learning and natural language processing, often via Retrieval-Augmented Generation (RAG), to understand customer questions, retrieve accurate answers from a knowledge base, and respond without human intervention. For e-commerce platforms, this means handling order inquiries, return policies, shipping questions, and product FAQs automatically. In this case study, the system resolved 87% of all inquiries autonomously at an average response time of 280ms.

How accurate is an AI FAQ bot compared with human agents?

When properly trained and maintained, an AI FAQ bot can match or exceed human consistency on structured, repeatable questions. The RAG system deployed in this case study achieved 92% accuracy on knowledge retrieval, with 99.2% system uptime. Human agents were reserved for edge cases, escalations, and complex problem-solving: the tasks that genuinely require judgment.

How many customer inquiries can the system handle?

The system in this case study was designed to handle 300–500 daily customer inquiries with room to scale further using the same infrastructure. The dual-instance architecture separated processing from orchestration, enabling high-volume intake without degrading response quality or speed.

What return on investment can AI customer service automation deliver?

Results vary by implementation quality and inquiry volume, but this enterprise deployment generated $2.1M in annual revenue uplift. The primary drivers were reduced operational overhead, faster inquiry resolution improving conversion, and the ability to reallocate staff to revenue-generating activities rather than repetitive FAQ handling.

How long does implementation take?

This enterprise implementation was completed across three structured phases covering approximately 18 weeks total (8, 6, and 4 weeks), from initial infrastructure setup through performance optimization and scaling. Smaller deployments with less complex data environments may move faster, while highly regulated or deeply integrated platforms may require additional time for compliance and testing.

What infrastructure does a production deployment require?

A production-grade deployment requires a structured customer record database, a vector store for semantic search (such as PGVector), a caching layer for performance, and an orchestration layer for workflow automation. This platform unified 250,000+ customer records as the foundation for RAG-powered responses, with 38 MCP tools deployed to manage integrations across the full stack.

Can AI customer service automation handle voice interactions?

Yes. This implementation included a voice AI layer achieving 99.9% voice reliability, allowing customers to interact via spoken queries that were processed through the same RAG knowledge base as text-based chat. Voice commerce and voice support are rapidly maturing capabilities within enterprise AI customer service automation.
