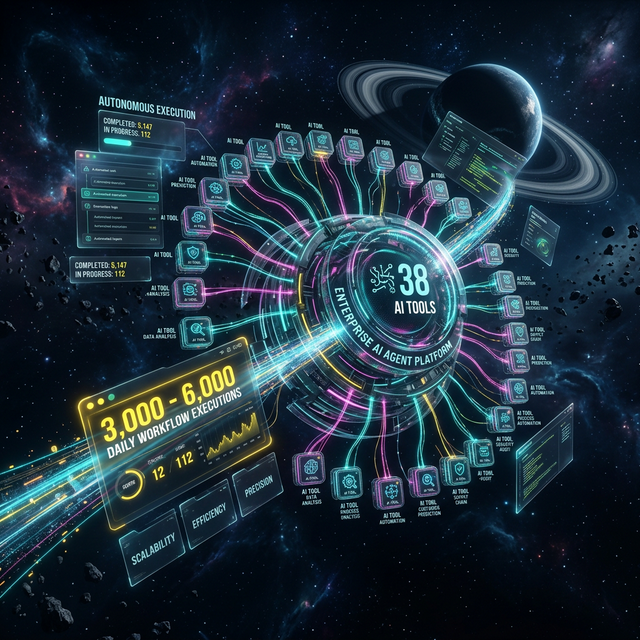

AI Business Automation at Enterprise Scale: 38 AI Tools Running Daily Operations Autonomously

$2.1M

Annual Revenue Uplift

38

AI Tools Deployed

TL;DR

A large-scale enterprise e-commerce platform deployed 38 AI tools through Blitz Front Media, creating a fully autonomous operational backbone that processes 3,000-6,000 workflow executions daily. The initiative unified 250,000+ customer records, achieved 99.2% system uptime, cut manual tasks by 85%, and delivered $2.1M in annual revenue uplift alongside a 300%+ operational ROI.

The Challenge: A Fragmented Enterprise Operating on Manual Effort

A large enterprise e-commerce platform serving hundreds of thousands of customers had reached an operational ceiling. Despite significant transaction volume and a broad customer base, the platform's core business processes were running on a patchwork of disconnected systems — an ERP platform, an e-commerce storefront, and more than a dozen legacy point-of-sale systems — none of which communicated reliably with the others. Customer data lived in silos. Order reconciliation required manual intervention. Inventory signals arrived late. And the team was absorbing over 40 hours of manual data reconciliation work every single week.

The business had the scale to justify enterprise AI automation but lacked the architecture to support it. There was no unified customer intelligence layer, no workflow orchestration engine, and no mechanism for autonomous decision-making across systems. Every customer interaction, every order, and every data sync required a human in the loop. As transaction volumes grew and customer expectations for real-time responsiveness rose, it became clear that the existing model was not just inefficient — it was a strategic liability. The platform needed a fundamentally different operational foundation built around autonomous AI business automation.

Disconnected Data Systems

The Challenge

Customer records fragmented across ERP, e-commerce, and 15+ legacy POS systems with no unified view

Our Solution

Unified data architecture consolidating 250,000+ customer records into a single intelligence layer

- Single source of truth for all customer data

- Eliminated cross-system data inconsistencies

- Enabled AI-driven personalization at scale
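As a rough illustration of what consolidating records into a single view can involve, the sketch below merges rows from several source systems on a normalized email key. The field names, the source labels, and the merge rule (first non-empty value wins) are assumptions made for this example; the case study does not describe the actual matching logic.

```python
def normalize_email(email: str) -> str:
    """Lowercase and trim an email so the same customer matches across systems."""
    return email.strip().lower()


def unify_records(records: list) -> list:
    """Merge records sharing a normalized email; first non-empty value wins per field."""
    unified = {}
    for record in records:
        key = normalize_email(record["email"])
        merged = unified.setdefault(key, {"email": key, "sources": []})
        merged["sources"].append(record["source"])
        for field_name, value in record.items():
            if field_name in ("email", "source"):
                continue
            # Keep the first non-empty value seen for each field.
            if value and not merged.get(field_name):
                merged[field_name] = value
    return list(unified.values())


# Hypothetical sample rows from three systems (not real data).
sample = [
    {"source": "erp", "email": "Ana@Example.com ", "name": "Ana Ruiz", "phone": ""},
    {"source": "storefront", "email": "ana@example.com", "name": "", "phone": "555-0100"},
    {"source": "pos", "email": "li@example.com", "name": "Li Wei", "phone": ""},
]
customers = unify_records(sample)
```

Real-world matching usually needs more than one key (phone, loyalty ID, address), so this should be read as the simplest possible version of the idea.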

Manual Process Overload

The Challenge

40+ hours per week spent on manual data reconciliation across disconnected platforms

Our Solution

38 AI tools deployed to autonomously handle routine operational tasks end-to-end

- 85% reduction in manual task volume

- Staff reallocated to higher-value work

- Sub-second processing (280ms average) replacing human queues

Scalability Ceiling

The Challenge

Manual workflows unable to keep pace with growing transaction and customer interaction volumes

Our Solution

AI agent platform processing 3,000-6,000 daily executions with 280ms average response time

- Elastic capacity matching daily demand

- 99.2% system uptime

- Consistent performance across peak periods

Customer Service Bottleneck

The Challenge

High-volume customer inquiries requiring manual resolution, limiting response speed and consistency

Our Solution

RAG-powered knowledge base with 92% ML accuracy automating 80% of FAQ interactions

- 87% autonomous resolution rate

- Instant responses replacing queue wait times

- Consistent answer quality at any volume

Key Metrics: What 38 AI Tools Delivering at Enterprise Scale Looks Like

AI Tools Deployed: 38

Daily Workflow Executions: 3,000-6,000

System Uptime: 99.2%

Average Response Time: 280ms

Annual Revenue Uplift: $2.1M

Operational ROI: 300%+

Manual Task Reduction: 85%

Autonomous Resolution Rate: 87%

RAG Accuracy: 92%

FAQ Automation Rate: 80%

Task Completion Rate: 94%

Customer Records Unified: 250,000+

Our Approach: Building an AI Agent Platform That Runs the Business

Blitz Front Media's strategy was not to automate individual tasks in isolation — it was to build a connected AI agent platform that could orchestrate the entire operational lifecycle autonomously. That required designing the architecture from first principles: a dual-instance infrastructure separating orchestration from processing, a centralized MCP server housing all 38 AI tools, a RAG-powered intelligence layer for customer-facing interactions, and a workflow engine capable of sustaining thousands of daily executions without degradation. Security, uptime, and data integrity were non-negotiable constraints built into the design from day one.

The approach was phased deliberately. Rather than deploying everything simultaneously and absorbing the integration risk, the team built the infrastructure foundation first — hardening security, validating the container architecture, and confirming database persistence before a single production workflow went live. Each subsequent phase layered on top of a proven base, ensuring that by the time the full suite of 38 AI tools was operational, every component had been validated in context. This sequenced methodology is what allowed the platform to achieve 99.2% uptime from the outset rather than earning it through a long stabilization period.

Key Takeaways

1. Design for autonomy from the start, not as a retrofit to existing manual processes

2. A centralized AI agent platform with 38 tools provides operational coverage that point solutions cannot replicate

3. Phased deployment reduces integration risk while maintaining momentum toward full automation

4. Security hardening and uptime guarantees must be architectural requirements, not afterthoughts

5. RAG-powered intelligence layers are the key to achieving 92% accuracy in customer-facing AI automation

6. Unified customer data (250,000+ records) is the prerequisite for meaningful AI personalization and analytics

Implementation Deep Dive: Four Phases to Autonomous Operations

Before & After

Manual Task Volume

- Before: 40+ hours/week of manual data reconciliation

- After: 85% reduction in manual processing requirements

Customer Records Access

- Before: Siloed across ERP, e-commerce, and 15+ legacy POS systems

- After: 250,000+ records unified in a single intelligence layer

Customer Service Resolution

- Before: Manual resolution required for all customer interactions

- After: 87% autonomous resolution rate, 80% FAQ automation

Workflow Execution Capacity

- Before: Manual processes unable to scale beyond limited daily transaction volumes

- After: 3,000-6,000 automated executions daily at 280ms average response time

System Reliability

- Before: Inconsistent uptime due to fragmented point-to-point integrations

- After: 99.2% uptime across all enterprise infrastructure

Operational ROI

- Before: No measurable ROI from automation; a fully manual cost center

- After: 300%+ operational ROI with $2.1M annual revenue uplift

Phase one established the production infrastructure — a containerized dual-instance deployment separating the orchestration layer from the processing layer, backed by a PostgreSQL database with vector extensions for semantic search capabilities. Security was hardened end-to-end: encrypted connections, IP restrictions, zero-credential deployment patterns, and comprehensive audit logging. This phase concluded with a validated production environment achieving 99.2% uptime before any business workflows were introduced. A Redis caching layer was integrated to manage workflow state at scale, and a monitoring stack was stood up to provide real-time health visibility across all components.

Phase two brought the MCP server online — the nerve center of the entire AI agent platform. This is where all 38 AI tools were registered, configured, and made available for workflow orchestration. The MCP architecture enabled complete workflow lifecycle management: creating, reading, updating, and deleting workflows programmatically, validating node configurations before deployment, and providing intelligent guidance that accelerated integration development significantly. Production-ready templates for common integration patterns were built and catalogued, giving the team a reusable library of proven workflow components rather than building from scratch for each new use case.
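The idea of validating node configurations before deployment can be sketched as follows. This is a minimal illustration under stated assumptions, not the MCP protocol or the platform's actual API: `ToolRegistry`, `validate_workflow`, and the tool names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Tool:
    name: str
    required_params: frozenset


@dataclass
class ToolRegistry:
    """Hypothetical in-memory registry standing in for a central tool server."""
    tools: dict = field(default_factory=dict)

    def register(self, name: str, required_params: set) -> None:
        self.tools[name] = Tool(name, frozenset(required_params))

    def validate_workflow(self, workflow: dict) -> list:
        """Return a list of problems; an empty list means the workflow can deploy."""
        problems = []
        for node in workflow["nodes"]:
            tool = self.tools.get(node["tool"])
            if tool is None:
                problems.append(f"{node['id']}: unknown tool '{node['tool']}'")
                continue
            missing = tool.required_params - set(node.get("params", {}))
            if missing:
                problems.append(f"{node['id']}: missing params {sorted(missing)}")
        return problems


registry = ToolRegistry()
registry.register("customer_lookup", {"customer_id"})
registry.register("create_order", {"customer_id", "items"})

# Hypothetical workflow definition with two deliberate mistakes.
workflow = {
    "name": "order-intake",
    "nodes": [
        {"id": "n1", "tool": "customer_lookup", "params": {"customer_id": "c-1"}},
        {"id": "n2", "tool": "create_order", "params": {"customer_id": "c-1"}},
        {"id": "n3", "tool": "sync_inventory", "params": {}},
    ],
}
problems = registry.validate_workflow(workflow)
```

Rejecting a workflow at definition time, rather than at its first failed execution, is one plausible way a central registry shortens integration work.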

Phase three deployed the production workflows themselves — six operational pipelines connecting the ERP system, e-commerce platform, and internal data systems. These workflows handled order creation, customer lookup, inventory synchronization, and real-time data transformation across system boundaries. An event-driven architecture ensured that triggers fired within milliseconds of source events, and a comprehensive error handling framework with intelligent retry logic and graceful degradation prevented individual workflow failures from cascading into system-wide disruptions. The result was a live production environment processing 3,000-6,000 daily executions with a 94% task completion rate.
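One common way to implement retry logic with graceful degradation is exponential backoff followed by a fallback value. The sketch below assumes that pattern; the case study does not say which retry policy was used, and the step functions and fallback label are hypothetical.

```python
import time


def run_with_retry(step, *, attempts=3, base_delay=0.01, fallback=None, sleep=time.sleep):
    """Call step(); retry on exception with exponential backoff, then fall back."""
    for attempt in range(attempts):
        try:
            return step()
        except Exception:
            if attempt == attempts - 1:
                break
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            sleep(base_delay * (2 ** attempt))
    # Graceful degradation: return a fallback instead of propagating the failure.
    return fallback


calls = {"count": 0}


def flaky_inventory_sync():
    """Hypothetical step that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("ERP temporarily unavailable")
    return "synced"


def always_failing():
    """Hypothetical step that never succeeds."""
    raise TimeoutError("POS endpoint not responding")


delays = []
result = run_with_retry(flaky_inventory_sync, sleep=delays.append)
degraded = run_with_retry(always_failing, fallback="queued-for-later", sleep=lambda _: None)
```

Because the failing step returns a fallback instead of raising, a single broken integration does not stop the workflows around it, which is the cascading-failure protection the paragraph above describes.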

Phase four focused on optimization and long-term operational health. The Bull queue architecture was implemented for asynchronous, distributed job processing, so that traffic spikes are buffered in the queue and worked off by available workers instead of overwhelming downstream systems. A comprehensive documentation system covering all operational aspects of the AI platform was finalized, giving the internal team full visibility into how each of the 38 tools functioned and how workflows could be maintained and extended over time. By this phase, the system was averaging a 280ms response time across all workflow executions.
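The queue-and-workers pattern behind asynchronous job processing can be sketched in a few lines. Bull itself is a Redis-backed job queue for Node.js; the example below is only an in-process stand-in built on Python's `asyncio.Queue`, with a hypothetical worker count and job payloads, to show how a burst is accepted immediately and drained by a fixed pool of workers.

```python
import asyncio


async def worker(name, queue, results):
    """Pull jobs until cancelled; each job is processed independently of the producer."""
    while True:
        job = await queue.get()
        try:
            await asyncio.sleep(0)  # stand-in for real work
            results.append((name, job))
        finally:
            queue.task_done()


async def process_burst(jobs, worker_count=3):
    queue = asyncio.Queue()
    results = []
    workers = [
        asyncio.create_task(worker(f"w{i}", queue, results))
        for i in range(worker_count)
    ]
    # The producer enqueues the whole burst at once and does not wait on processing.
    for job in jobs:
        queue.put_nowait(job)
    await queue.join()  # block until every queued job has been marked done
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results


results = asyncio.run(process_burst(range(20)))
```

A queue does not remove waiting time; it moves it from the caller to the backlog, so worker count and queue depth are the figures to monitor during peaks.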

Technical Architecture: How 38 AI Tools Coordinate at Scale

The technical architecture that powers this AI business automation platform operates across three distinct layers. At the infrastructure layer, a containerized dual-instance deployment separates concerns: the orchestration layer manages workflow logic and state, while the processing layer handles the actual execution workloads. Redis provides in-memory caching for workflow state and session data, while PostgreSQL with vector extensions stores both relational data and the high-dimensional embeddings that power the RAG intelligence layer. This separation of concerns is what enables the system to maintain 99.2% uptime even during peak execution periods.

At the intelligence layer, a Retrieval-Augmented Generation system achieves 92% ML accuracy by combining a curated knowledge base with large language model processing. When customer queries arrive — whether through voice agents or digital channels — the RAG system retrieves semantically relevant context before generating responses, ensuring answers are grounded in accurate product, policy, and account information rather than model hallucination. This architecture is what enables the platform to automate 80% of FAQ interactions with confidence, contributing directly to the 87% autonomous resolution rate across all customer-facing interactions.
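The retrieve-then-generate flow described above can be illustrated with a toy example. Everything here is an assumption for demonstration: the bag-of-words "embedding" stands in for a learned embedding model, the in-memory list stands in for a vector store, and the knowledge-base entries are invented. The language model call itself is omitted.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would use a learned embedding model."""
    return Counter(text.lower().replace("?", "").replace(".", "").split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list, k: int = 1) -> list:
    """Return the k documents most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda doc: cosine(query_vec, embed(doc)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list) -> str:
    """Ground the model by placing retrieved passages ahead of the question."""
    joined = "\n".join(f"- {passage}" for passage in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


# Hypothetical knowledge-base entries.
knowledge_base = [
    "Returns are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards never expire.",
]
question = "How long does shipping take?"
context = retrieve(question, knowledge_base, k=1)
prompt = build_prompt(question, context)
```

The grounding step is the part that matters: the model is asked to answer from retrieved passages rather than from its own recall.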

Before AI Business Automation

- 40+ hours per week spent on manual data reconciliation

- Customer records siloed across ERP, e-commerce, and 15+ legacy POS systems

- No autonomous customer service resolution capability

- Manual workflows unable to scale with growing transaction volumes

- Integration development requiring specialized technical expertise and long timelines

- No unified view of 250,000+ customer records

After Deploying 38 AI Tools

- 85% reduction in manual task volume, with staff freed for strategic work

- 250,000+ customer records unified into a single intelligence layer

- 87% autonomous resolution rate across all customer interactions

- 3,000-6,000 daily workflow executions with 280ms average response time

- 38 MCP tools enabling rapid workflow creation and management

- 94% task completion rate with intelligent error recovery

Results & Business Impact: The Case for Autonomous Operations

The operational results of this AI agent platform deployment validate the business case for autonomous operations at enterprise scale. The platform now processes between 3,000 and 6,000 workflow executions every single day — consistently, reliably, and with a 280ms average response time that meets enterprise SLA requirements. System uptime holds at 99.2%, meaning the platform is available and performing across virtually every hour of every day, including peak periods that would have overwhelmed the legacy manual processes. The 94% task completion rate across production workflows confirms that the system is not just running — it is completing work accurately.
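The headline percentages are easier to reason about when converted into absolute terms. The short calculation below assumes a 365-day year and an even spread of executions across the day, which real traffic will not follow exactly.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years
MINUTES_PER_DAY = 24 * 60


def downtime_hours_per_year(uptime_percent: float) -> float:
    """Hours per year a system is unavailable at the given uptime percentage."""
    return HOURS_PER_YEAR * (100 - uptime_percent) / 100


def average_rate_per_minute(executions_per_day: int) -> float:
    """Average executions per minute if the daily load were spread evenly."""
    return executions_per_day / MINUTES_PER_DAY


downtime = downtime_hours_per_year(99.2)
low_rate = average_rate_per_minute(3000)
high_rate = average_rate_per_minute(6000)
```

Under these assumptions, 99.2% uptime corresponds to roughly 70 hours of downtime per year, and 3,000 to 6,000 daily executions average out to about 2 to 4 per minute.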

From a financial perspective, the deployment generated $2.1M in annual revenue uplift — driven by optimized operations, improved customer experience consistency, and the ability to serve customers at a scale and speed that was previously impossible. The operational ROI reached 300%+, reflecting not just cost savings from the 85% manual task reduction but also the revenue gains enabled by AI-powered customer intelligence. When 250,000+ customer records are unified and accessible to AI systems in real time, the platform can deliver personalized experiences and predictive insights that directly influence purchasing behavior and retention.

Implementation Timeline

Infrastructure Setup & Security Hardening

8 weeks: Established production-ready containerized infrastructure with dual-instance architecture separating orchestration and processing layers. Deployed PostgreSQL with vector extensions, Redis caching, and comprehensive monitoring. Implemented full security hardening, including encrypted connections, IP restrictions, and zero-credential deployment, achieving 99.2% uptime before any production workflows were introduced.

MCP Server Integration & 38 AI Tool Deployment

10 weeks: Built and deployed the centralized MCP server housing all 38 AI tools for comprehensive workflow management. Implemented complete workflow lifecycle management (create, read, update, delete), intelligent node discovery and validation, and a production template library for common integration patterns. This phase established the control plane governing all autonomous operations.

Enterprise Workflow Development & Production Launch

12 weeks: Developed and deployed six production workflows connecting the ERP system, e-commerce platform, and internal data systems. Implemented event-driven architecture, comprehensive error handling with intelligent retry logic, and real-time data transformation across system boundaries. Achieved 3,000-6,000 daily executions with a 94% task completion rate at launch.

Performance Optimization & Operational Documentation

6 weeks: Implemented Bull queue architecture for distributed asynchronous job processing and tuned the full stack to achieve a 280ms average response time. Finalized a comprehensive documentation system covering all 38 AI tools and operational workflows. Validated $2.1M annual revenue uplift and 300%+ operational ROI through verified performance metrics.


Success Story: Operations Director Perspective

“Before this deployment, our team was spending an enormous amount of time every week just keeping systems synchronized. Now those same workflows run autonomously — 3,000 to 6,000 times a day — and our team is focused on growth initiatives instead of data reconciliation. The 85% reduction in manual tasks was transformative, but what really changed the business was having a single, accurate view of every customer. The AI tools don't just automate tasks; they make our entire operation more intelligent.”

— Operations Director, Enterprise E-Commerce Platform, Central US

Key Takeaways: What Enterprise AI Automation Actually Requires

Key Takeaways

1. Operating 38 AI tools through a centralized MCP server architecture is what makes true autonomous business operations achievable, rather than relying on individual point solutions

2. A 99.2% uptime target must be an architectural requirement from day one, not an aspirational SLA negotiated after deployment

3. RAG-powered intelligence achieving 92% accuracy is the foundation of trustworthy customer-facing automation: accuracy enables autonomy

4. Unifying 250,000+ customer records before deploying AI is not optional: fragmented data produces fragmented AI outcomes

5. An 85% reduction in manual tasks is achievable when workflows are designed to be autonomous end-to-end, not just partially assisted

6. The 280ms average response time shows that enterprise-scale AI automation does not require a trade-off between volume and speed

7. $2.1M in annual revenue uplift reflects the compounding effect of automation across customer service, operations, and data intelligence simultaneously

8. Phased implementation with validated infrastructure at each stage is what separates a 300%+ ROI outcome from a costly integration failure

Lessons Learned: What We Would Tell Any Enterprise Considering AI Automation

The most important lesson from this deployment is that the infrastructure decision determines everything downstream. Organizations that try to bolt AI tools onto fragmented system architectures find that automation amplifies the fragmentation rather than resolving it. The decision to build a unified data layer first — consolidating 250,000+ customer records before deploying any customer-facing AI — was what allowed the RAG system to achieve 92% accuracy rather than producing unreliable outputs from inconsistent source data. Data quality is not a prerequisite that AI can compensate for. It is the prerequisite that AI requires.

The second critical lesson is that 38 AI tools require a governance architecture — a centralized control plane that gives operators visibility into what each tool is doing, why, and with what outcomes. The MCP server approach solved this by creating a single interface through which all 38 tools were managed, validated, and monitored. Without that centralization, organizations risk deploying AI that operates as a black box, making the 94% task completion rate impossible to verify and the 87% autonomous resolution rate impossible to trust. Governance is not bureaucracy in the context of enterprise AI automation — it is the mechanism that makes autonomous operations reliable enough to actually depend on.

Organizations with Fragmented Data Systems

The Challenge

AI cannot produce reliable outputs from inconsistent, siloed source data across multiple legacy systems

Our Solution

Unify all customer records into a single intelligence layer before deploying any AI automation tools

- 92% RAG accuracy becomes achievable

- Personalization works correctly at scale

- AI decisions are grounded in complete data

High-Volume Customer Service Operations

The Challenge

Manual resolution cannot scale to meet enterprise interaction volumes without quality degradation

Our Solution

Deploy RAG-powered autonomous resolution targeting 80%+ FAQ automation and 87%+ overall resolution rate

- Instant responses at any volume

- Consistent answer quality

- Human agents freed for complex cases

Multi-System Enterprise Integration

The Challenge

Point-to-point integrations between ERP, e-commerce, and POS systems create chronic synchronization failures

Our Solution

Centralized workflow orchestration engine with event-driven architecture and intelligent error recovery

- 94% task completion rate

- 3,000-6,000 daily executions without manual oversight

- 99.2% uptime across integrated systems

AI ROI Justification at Board Level

The Challenge

Enterprise leadership requires measurable financial outcomes before committing to large-scale AI infrastructure investment

Our Solution

Phased deployment with verified metrics at each stage, targeting 300%+ operational ROI and $2.1M revenue uplift

- Clear financial case at each phase gate

- 85% manual task reduction reduces operating costs immediately

- Revenue uplift validates strategic AI investment

Frequently Asked Questions About Enterprise AI Business Automation

Enterprise leaders evaluating AI business automation consistently ask the same questions: How many AI tools do we actually need? What does autonomous operations really mean in practice? How do we verify the ROI? The answers from this deployment are specific and measurable. The platform operates 38 AI tools through a centralized agent architecture, processes 3,000-6,000 executions daily with a 94% task completion rate, and generated $2.1M in annual revenue uplift alongside a 300%+ operational ROI. The FAQ section below addresses the questions we hear most frequently from enterprise teams considering a similar transformation.


Frequently Asked Questions

Deploying 38 AI tools means each major operational function — from order processing and customer data unification to FAQ resolution and workflow orchestration — has a dedicated, purpose-built AI agent handling it autonomously. In this case, all 38 tools operated through a centralized MCP server architecture, giving the platform comprehensive coverage of its daily operations without human intervention for routine tasks.

This enterprise e-commerce platform's AI agent platform consistently processes between 3,000 and 6,000 workflow executions per day, driven by hundreds of daily customer interactions and backend data operations. The system maintains a 280ms average response time and 99.2% uptime across that execution volume.

This deployment achieved 300%+ operational ROI alongside $2.1M in annual revenue uplift. Results vary by organization size, integration complexity, and baseline process maturity — but the primary ROI drivers here were an 85% reduction in manual tasks and an 87% autonomous resolution rate for customer interactions.

The RAG-powered knowledge base in this deployment achieved 92% ML accuracy on semantic search queries, while the system automated 80% of all FAQ interactions autonomously. The overall autonomous resolution rate across customer service interactions reached 87%.

This full deployment ran across four structured phases — infrastructure setup, MCP server integration, enterprise workflow development, and performance optimization. Organizations should plan for a multi-month engagement to achieve enterprise-grade reliability, proper security hardening, and the depth of integration needed to sustain 3,000-6,000 daily executions.

This platform unified an ERP system, a major e-commerce platform, and multiple legacy point-of-sale systems — consolidating 250,000+ customer records into a single operational layer. The workflow engine then orchestrated real-time data flow between all connected systems with a 94% task completion rate.

Yes — when properly architected. This deployment achieved 99.2% uptime with a fully hardened security framework, including encrypted connections, IP restrictions, zero-credential deployment patterns, comprehensive audit logging, and access controls. The platform operates across hundreds of daily customer transactions with enterprise-grade compliance standards.
